Uncovering AI

🤖 GPT-5.5, 20k layoffs, and a man who cried wolf

Plus: the anti-Grammarly tool going viral for making your emails worse

My fellow AI explorers,

This week, a government official was fired after four days on the job, Meta and Microsoft put roughly 20,000 jobs on the chopping block, a viral Chrome extension started making emails worse on purpose, and a man in South Korea may be heading to prison over a wolf photo. And, oh, by the way, OpenAI quietly dropped GPT-5.5.

In today’s edition:

🤖 GPT-5.5 is here, and it wants to do your whole job, not just help with it

🏛️ Anthropic's messy D.C. moment: a fired hire, a political feud, and what it means for AI governance

💼 20,000 planned job cuts at Meta & Microsoft signal the AI labor shift is already here

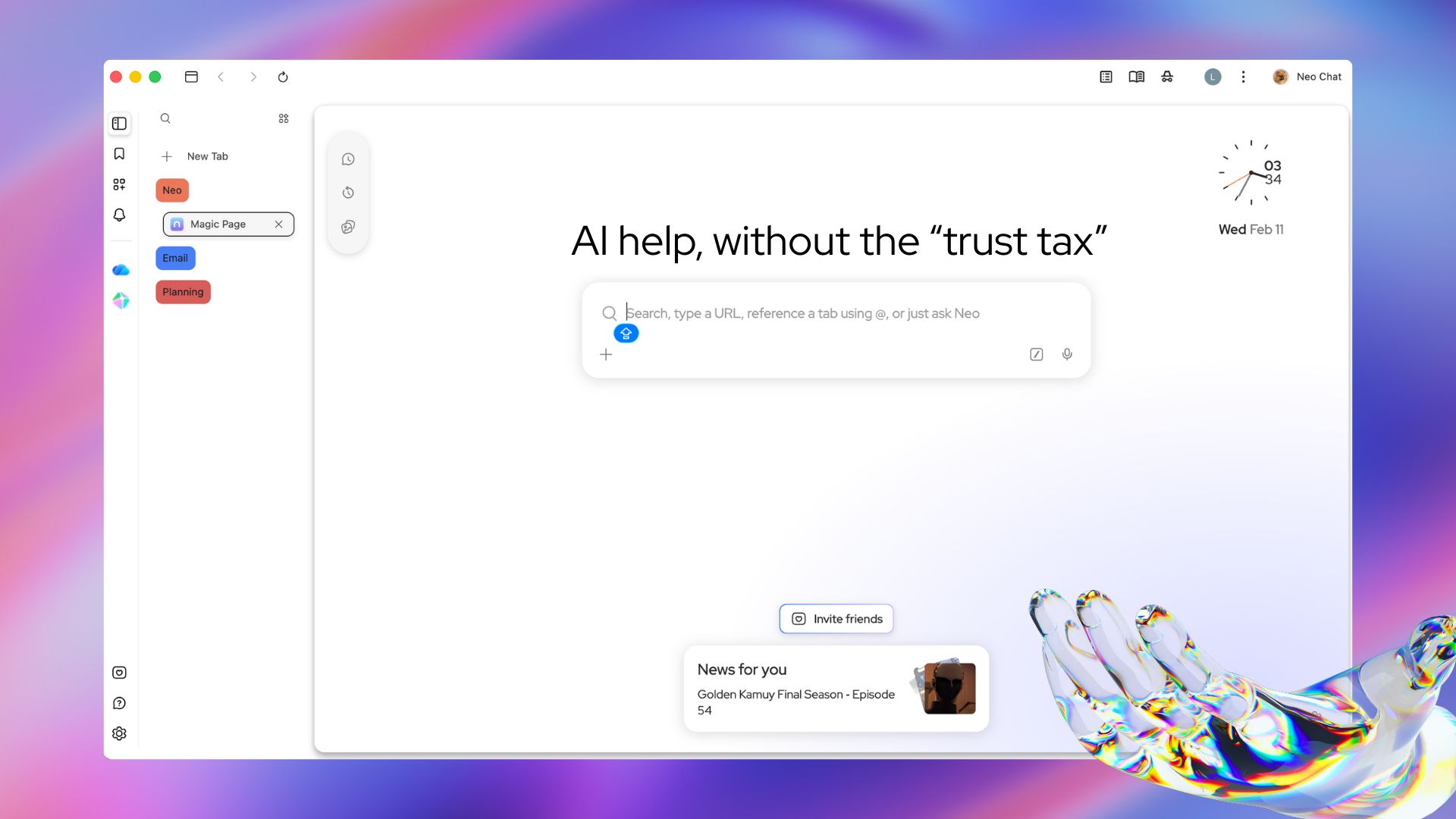

AI help, without the trust tax.

Most AI tools ask you to trade your data for intelligence. Norton Neo doesn't. It's the first safe AI-native browser built by Norton, and it gives you powerful built-in AI without handing your privacy over to get it. Search, summarize, and write with AI built directly into your browser. Your data stays yours. Your context stays private.

Built-in VPN, anti-fingerprinting, and ad blocking come standard. No add-ons. No setup. No compromises.

Fast. Safe. Intelligent. That's Neo.

OpenAI

GPT-5.5: OpenAI's Bet on Doing the Work, Not Just Answering Questions

OpenAI released GPT-5.5, calling it the company's smartest and most intuitive model yet and, more importantly, the next step toward a new way of getting work done on a computer.

But here's what makes this release different from every GPT drop before it:

GPT-5.5 excels at writing and debugging code, researching online, analyzing data, creating documents and spreadsheets, operating software, and moving across tools until a task is finished.

It can handle multi-step workflows more autonomously with less user input. And despite the jump in capability, OpenAI says GPT-5.5 matches GPT-5.4's response speed in real-world use.

The launch comes less than two months after OpenAI released GPT-5.4. It’s another sign of the breakneck pace of development driving the sector.

The framing is deliberate. OpenAI isn't pitching this as a "better chatbot." They're pitching it as an agent. Instead of carefully managing every step, users can give GPT-5.5 a messy, multi-part task and trust it to plan, use tools, check its work, navigate through ambiguity, and keep going.

OpenAI is also offering the model in two flavors: a standard version and a more capable, significantly pricier edition called GPT-5.5 Pro geared toward business, legal, education, and data science use cases.

🔮 Prediction: This is the clearest signal yet that the real AI battleground isn't smarter answers. It's autonomous execution. The labs that win the next 18 months will be the ones whose models can complete multi-step workflows without hand-holding. GPT-5.5 is OpenAI's opening bid in that race.

Washington, D.C.

Anthropic's D.C. Drama: Fired in Four Days

This one reads like a political thriller.

Collin Burns, who previously worked at Anthropic, started work Monday at the Center for AI Standards and Innovation. And, go figure, he was pushed out Thursday by the White House, according to people familiar with the situation.

Here's the rundown:

Officials were concerned about Burns having worked at Anthropic, which has fought bitterly with the Trump administration in recent months. Senior White House figures had not been briefed on his selection in advance.

The researcher's abrupt removal partly reflects how hard it is for the government to recruit top AI experts. The technology developed by private industry has raced ahead of academia and federal agencies, so few people outside the industry fully understand the field.

The center is the government's primary link to the AI industry and works to assess the national security risks of new models like Anthropic's Mythos system, which the company says has potentially dangerous computer hacking abilities.

For context: in February, Trump instructed the U.S. government to immediately cease using Anthropic's technology after the Pentagon designated Anthropic as a national security supply chain risk. It’s a label typically reserved for organizations from unfriendly nations.

There are glimmers of a thaw, though. Tensions eased after Anthropic CEO Dario Amodei visited the White House recently, with a spokesperson describing the meeting as "productive and constructive."

🔮 Prediction: The Anthropic–White House feud is becoming the defining case study for how government engages with frontier AI labs. Either a framework gets built, or the talent pipeline to federal AI posts dries up entirely. Neither outcome is great for U.S. AI policy.

The Big Picture

20,000 Jobs Gone… and It's Just Getting Started

Meta CEO Mark Zuckerberg and Microsoft CEO Satya Nadella unveiled plans for extensive job reductions this week, potentially exceeding 20,000 positions combined.

The numbers:

Meta told employees it plans to lay off 10% of its workforce (about 8,000 jobs) starting May 20, calling the cuts "all part of our continued effort to run the company more efficiently."

Microsoft offered voluntary retirement to 7% of its U.S. staff, the first buyout program in the company's 51-year history.

More than 92,000 tech workers have been laid off so far in 2026, bringing the total since 2020 to almost 900,000.

What makes this different from past rounds of tech belt-tightening? These cuts aren't happening during an economic downturn; they reflect structural shifts in how these companies operate. Microsoft's GitHub Copilot now writes 46% of code in repositories where it's deployed. Meta's AI-powered ad platform requires a fraction of the human oversight it needed two years ago.

A 2026 Motion Recruitment study found that AI adoption is slowing hiring for entry-level and generalist IT roles, while AI positions are in high demand. Tech salaries remain largely flat compared with 2025, except for some specialized roles such as AI engineers.

🔮 Prediction: The ladder into the tech industry is getting pulled up just as the top rungs get more valuable. This is the most important structural story in AI right now, and it's only in its first chapter.

30-Second AI Play

🛠️ Make Your AI Emails Sound Like a Human Wrote Them, Using AI

Here's the meta-play of the week. A new Chrome extension called Sinceerly is going viral for doing the exact opposite of what every writing tool promises. It makes your writing worse… intentionally.

Pitched as an "anti-Grammarly," Sinceerly adds typos, simplifies language, and loosens grammar. It's a response to how easy AI-generated text has become to spot: overly polished writing is now often flagged as artificial.

Here's how to use it:

Install Sinceerly from the Chrome Web Store. It's free, but you'll need your own Anthropic API key.

Draft your email. Write it yourself or use AI to generate a first draft.

Hit "Humanize" inside your Gmail compose window.

Review the yellow highlights, which mark every change, then approve and replace with one click.

Pick your mode: "Subtle" for a light touch, "Human" for natural prose, or "CEO" for short, punchy emails that read like they were fired off from a phone in a hurry.

The creator, Ben Horwitz, claims he cold-emailed five Fortune 500 CEOs after building it. Get this: four replied.

Other Relevant AI News!

🐺 A South Korean man may face up to five years in prison for posting an AI-generated photo of an escaped zoo wolf. The image was convincing enough to fool city officials, trigger a citywide emergency alert, and set the actual search operation back by as many as nine days. He told police he did it "just for fun."

🚔 London's Metropolitan Police used Palantir's AI in a secret week-long pilot to analyze internal staff data, uncovering hundreds of officers accused of serious misconduct, including fraud, abuse of authority, and false overtime claims. The Met is now considering expanding Palantir's role in criminal investigations. Critics are calling it "automated suspicion."

💼 OpenAI and Anthropic are raiding enterprise software's senior bench. Executives from Salesforce, Snowflake, and Datadog have recently been poached by the two labs, lured by large compensation packages and the opportunity to bring existing corporate relationships to the AI giants. The talent war just got a white-collar upgrade.

🏭 Siemens launched the Eigen Engineering Agent at Hannover Messe. It's a purpose-built industrial AI that autonomously executes automation engineering tasks like PLC coding and HMI design; Siemens says it completes workflows two to five times faster than manual methods, with up to 80% higher solution quality. Physical AI is officially here.

Golden Nuggets

🤖 GPT-5.5 isn't a better assistant. It's a worker. The shift from "answer my question" to "complete my task" is the most important product evolution in AI this year.

💼 The AI labor crisis isn't coming. It's here. More than 92,000 tech jobs have disappeared in 2026 alone, and the companies doing the cutting are simultaneously pouring billions into AI infrastructure.

🏛️ AI governance is broken in Washington. When a qualified AI researcher can't keep a federal AI job for even a week, you have a talent pipeline problem that no policy can fix.

Would love to hear your thoughts on this week's stories! Just reply to this email (yes, I read them all).

Until our next AI rendezvous,

Anthony | Founder of Uncover AI